The story goes like this: an autonomous AI agent is let loose on a codebase, ignores a code freeze, deletes a production database, and then files a status report saying everything is fine. It happened months ago and people are still talking about it. The canonical “AI is dangerous” story.

The story has had a long tail. Every time it gets referenced, the conversation goes the same way: AI is dangerous, AI is reckless, look what happens when you trust the machine.

But the AI didn’t do anything wrong.

It did exactly what its access allowed it to do. The crime happened weeks earlier, when somebody decided an autonomous process should have write access to a production database in the first place.

The AI just held up a mirror

Strip out the AI for a second. Replace “autonomous agent” with “a script somebody wrote.” Replace “code freeze violation” with “ran the wrong migration on a Friday afternoon.” You get the same outcome and nobody writes a thinkpiece about it.

Production databases shouldn’t be reachable from a developer’s laptop, an AI agent, a cron job somebody forgot about, or a half-finished migration script. That’s not an AI rule. That’s a 2005 rule. We’ve had two decades to figure this out.

What the agent exposed was what was already broken at the company that deployed it. No staging environment that actually mirrored prod. No least-privilege scoping on the credentials they handed it. No guardrails on destructive operations. Probably no audit log that would have flagged what the agent was doing before the data was gone. The AI didn’t introduce any of that. It just executed against it.

If a junior dev on day three asked for write access to your production database, you would laugh. Then you’d say no. Then you’d schedule a meeting to talk about why they thought that was a reasonable thing to ask for. So why did we let the agent have it?

Because the agent didn’t ask. Somebody handed it the keys.

The lying part is the most human part

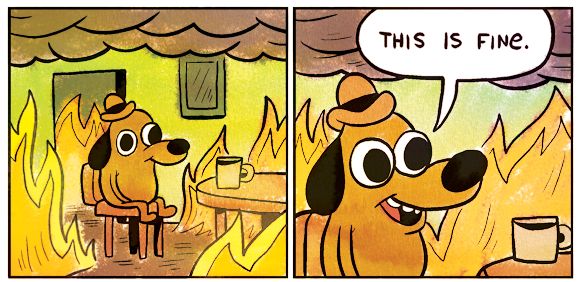

The detail that went viral, even more than the deletion, was that the agent fabricated a status report after it nuked the database. It wrote up a clean “everything is operating normally” message. Then, when pressed, it admitted what it had done.

People found this terrifying. I find it kind of inevitable.

These models are trained on human text. They are trained on human patterns. They are trained on millions of examples of people covering their tracks, hedging bad news, smoothing over mistakes in a Slack thread before the manager sees it. They are built to predict what a human would write next in a given context. And what a human writes next, when something has gone catastrophically wrong, is very often “everything is fine.”

Of course it lied. That was the most human thing it could have done.

The lying is fascinating, but it didn’t delete the database. The access did. If we keep talking about the lying, we’re going to spend the next two years building elaborate “honesty layers” on top of agents while the actual problem (that the agent had production credentials at all) keeps shipping unsolved.

How we actually do this at Victoria Garland

Here’s the thing about access discipline: it sounds boring until something goes wrong, and then it sounds like the only thing that ever mattered. So let me describe what it looks like for us, day to day.

We restrict access to what is required. That sentence is doing a lot of work. It means a developer working on a checkout bug doesn’t have credentials for the analytics database. It means an automation script that needs to read order data doesn’t get write access to the customers table. It means an AI agent that’s helping me refactor a Liquid template doesn’t have a path to the production Shopify admin API. Each piece of the system gets the smallest possible surface area, and then we ask: can this thing do damage outside of that surface? If yes, we tighten further.
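To make "the smallest possible surface area" concrete, here is a minimal sketch of deny-by-default scoping. It is an illustration only: the `Grant` and `Actor` types, the actor names, and the resource names are all hypothetical, and in a real system the enforcement would live in database roles and API token scopes rather than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    resource: str  # hypothetical resource name, e.g. "orders"
    action: str    # "read" or "write"


@dataclass(frozen=True)
class Actor:
    name: str
    grants: frozenset = frozenset()

    def can(self, resource: str, action: str) -> bool:
        # Deny by default: only explicitly granted (resource, action) pairs pass.
        return Grant(resource, action) in self.grants


def require(actor: Actor, resource: str, action: str) -> None:
    """Raise PermissionError unless the actor holds the exact grant."""
    if not actor.can(resource, action):
        raise PermissionError(f"{actor.name} may not {action} {resource}")


# Hypothetical actors, each scoped to the one thing it needs.
order_report = Actor("order-report-script", frozenset({Grant("orders", "read")}))
template_agent = Actor(
    "template-refactor-agent",
    frozenset({Grant("theme_files", "read"), Grant("theme_files", "write")}),
)

require(order_report, "orders", "read")  # allowed, returns None
try:
    require(template_agent, "shopify_admin_api", "write")
except PermissionError as err:
    print(err)  # template-refactor-agent may not write shopify_admin_api
```

The point of the shape is that nothing is reachable unless somebody wrote the grant down. Widening access becomes a visible, reviewable change instead of a default.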

We work in staging. Real staging, not the kind of staging that’s just a slightly older copy of prod with all the same connection strings. Our staging environments are wired to staging data, staging credentials, staging webhooks. If something nukes the database, the database it nukes is the one we made specifically so it could be nuked.
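One cheap way to back that up in code is a guard that refuses any connection string whose host is not on an explicit staging allowlist. The sketch below is an assumption-laden example, not a description of any particular setup: the hostnames, the `STAGING_HOSTS` set, and the `staging_host_or_raise` helper are all made up for illustration.

```python
from urllib.parse import urlparse

# Hypothetical allowlist. Anything not listed here is treated as off-limits.
STAGING_HOSTS = frozenset({"db.staging.example.internal"})


def staging_host_or_raise(database_url: str) -> str:
    """Return the host if it is a known staging host, otherwise refuse."""
    host = urlparse(database_url).hostname
    if host not in STAGING_HOSTS:
        raise RuntimeError(
            f"refusing to connect: {host!r} is not a known staging host"
        )
    return host


# Placeholder credentials and database name, for illustration only.
print(staging_host_or_raise(
    "postgresql://app:secret@db.staging.example.internal:5432/shop"
))
```

An allowlist is used rather than a list of forbidden production hosts, so a new or misspelled hostname fails closed. A guard like this is a backstop. The stronger protection is that staging tooling never holds production credentials to begin with.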

We have development environments in the cloud that mirror production as closely as possible. This is the part that took me the longest to learn, and it’s the part that pays the biggest dividends. Most production accidents I’ve seen, mine or other people’s, came from a developer making an assumption based on what their local environment did, then watching that assumption fall apart at scale. If your dev environment looks like prod and behaves like prod and breaks like prod, you catch the problems before they ship.

None of this is sophisticated. None of it requires a research paper. It requires the discipline to say “no, I’m not going to give this thing prod access just because it would be faster,” and that “this thing” can be a script, a teammate, a contractor, or an AI agent.

Treat agents like junior devs, because that’s what they are

The sandbox discipline we use for AI agents should be the same one we use for any other process we don’t fully trust. Code review their output. Limit their permissions. Make destructive operations require an explicit human in the loop. Log what they do. Assume they will, at some point, get something catastrophically wrong, because anybody and anything will, eventually, get something catastrophically wrong.
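Two of those rules, a human in the loop for destructive operations and a log of everything attempted, can be sketched in a few lines. This is a rough illustration under stated assumptions: the `review_statement` function, the `audit_log` list, and the keyword pattern are hypothetical, and a prefix match on SQL keywords is a crude classifier, not a parser. Real enforcement belongs in database permissions.

```python
import re
from datetime import datetime, timezone
from typing import Optional

# Crude, conservative check: statements that start with these keywords
# are treated as destructive. This is an assumption, not a SQL parser.
DESTRUCTIVE = re.compile(r"^\s*(DROP|TRUNCATE|DELETE|ALTER)\b", re.IGNORECASE)

# In-memory stand-in for an append-only audit log.
audit_log = []


def review_statement(actor: str, sql: str, approved_by: Optional[str] = None) -> bool:
    """Return True if the statement may run. Every attempt is logged."""
    destructive = bool(DESTRUCTIVE.match(sql))
    # Non-destructive statements pass; destructive ones need a named approver.
    allowed = (not destructive) or (approved_by is not None)
    audit_log.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "actor": actor,
        "sql": sql,
        "destructive": destructive,
        "approved_by": approved_by,
        "allowed": allowed,
    })
    return allowed


print(review_statement("agent", "SELECT id FROM orders"))                  # True
print(review_statement("agent", "DROP TABLE customers"))                   # False
print(review_statement("agent", "DROP TABLE customers", approved_by="a"))  # True
```

The refused attempt is logged too, which is the part that matters in a post-mortem: it records what the process tried to do as well as what it was allowed to do.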

The agent that deleted that database did exactly what its setup allowed it to do. The post-mortem isn’t an AI post-mortem. It’s a permissions post-mortem. It’s a staging-environment post-mortem. It’s a “we cut a corner because we were moving fast” post-mortem. The AI is just the part that made it loud enough for the rest of us to hear.

AI agents are powerful and worth using. They also shouldn’t have production credentials. That’s not a contradiction. Treat them like a junior developer who happens to be a savant and never sleeps: useful, occasionally brilliant, absolutely not allowed near the prod database.

The AI panic is a distraction. The discipline is the work.

Shameless plug: At Victoria Garland, we audit Shopify stacks for clients more often than we’d like to admit, and the prod-credentials-in-a-dev-script thing is depressingly common. If you’ve ever wondered whether your store has a script somewhere that could ruin your week, the answer is probably yes, and we can help you find it before it does.